Unity Catalog - the Unified Self

How Databricks Unity Catalog Mirrors the Advaitic Vision of One Undivided Reality

By Karthik Darbha | Tech4Nirvana

The Problem of Many Selves

In the Advaita Vedanta tradition, the root cause of all suffering is Avidya (अविद्या), or ignorance. Not ignorance in the ordinary sense of not knowing facts, but a more fundamental confusion: mistaking the one for the many.

The individual soul — Jivatman — believes itself to be separate, bounded, and independent. It clings to its uniqueness, defends its boundaries, and experiences the world as a collection of distinct, competing objects. This is the delusion that Advaita seeks to dissolve — not through argument alone, but through direct recognition: there is only one reality, and that reality is Brahman.

I have been a data engineer for over two decades. I have worked in Healthcare, Pharma, Financial Services, and Retail. I have seen data architectures built with enormous care and technical sophistication fail — not because the tools were wrong, but because the underlying philosophy was fragmented.

And I have come to believe that most of these failures are not technical failures at all. They are philosophical ones. They are the failures of a mind that sees separation where there is unity, multiplicity where there is one source.

Advaita Vedanta — the philosophy of non-duality — offers a lens that cuts through this complexity with a clarity I have found nowhere else in the technical literature. This post is my attempt to make that connection explicit.

A note on Sanskrit terms: This article draws on several concepts from Advaita Vedanta. Each term is defined on first use, but for quick reference: Brahman (ultimate reality), Maya (appearance/illusion), Avidya (ignorance), Adhikara (qualification/eligibility), Pratibimba (reflection), Viveka (discriminative wisdom), Neti Neti (not this, not this — iterative negation), Dharma (right action in context), Tat tvam asi (Thou art That — the identity of self and ultimate reality). No prior knowledge of Vedanta is required to follow the technical argument.

I. Brahman and the Lakehouse: The One Source of Truth

The central claim of Advaita Vedanta, articulated most powerfully by Adi Shankaracharya in the eighth century, is deceptively simple: there is only one reality — Brahman. Everything we perceive as separate — the chair, the tree, your thoughts, my words — is a modification of this one undivided ground. The apparent multiplicity of the world is Maya, the appearance of difference superimposed upon unity.

Now consider the defining problem of enterprise data architecture: the proliferation of truth.

Every department maintains its own definition of a customer. Sales counts by active accounts. Finance counts by billing entities. Marketing counts by email subscriptions. The data warehouse has a customers table. The CRM has another. The data lake has three more. Each one is confidently called the source of truth, and none of them agree.

In Vedantic terms, this is precisely the confusion of Maya — mistaking the modifications for the ground, the shadows on the wall for the light itself.

The Lakehouse architecture — and more specifically, the Unity Catalog pattern in Databricks — is, philosophically, an attempt to establish Brahman in the data estate. One catalog. One lineage. One governed source from which all downstream consumption derives. Not many truths dressed up as one, but a single ontological ground from which all analytical perspectives emerge as views.
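To make the idea of "many perspectives, one ground" concrete, here is a minimal sketch in plain Python. The record fields (`active`, `billing_entity`, `email_opt_in`) and the three counting rules are assumptions invented for illustration; they are not taken from any real system. The only point is that the departmental numbers can legitimately differ while all being derived from one governed set of records.

```python
# Hypothetical governed customer records: one source, several perspectives.
CUSTOMERS = [
    {"customer_id": 1, "active": True,  "billing_entity": "B-100", "email_opt_in": True},
    {"customer_id": 2, "active": True,  "billing_entity": "B-100", "email_opt_in": True},
    {"customer_id": 3, "active": False, "billing_entity": "B-200", "email_opt_in": True},
    {"customer_id": 4, "active": True,  "billing_entity": None,    "email_opt_in": True},
]


def sales_count(rows):
    """Sales perspective: customers with an active account."""
    return sum(1 for r in rows if r["active"])


def finance_count(rows):
    """Finance perspective: distinct billing entities."""
    return len({r["billing_entity"] for r in rows if r["billing_entity"] is not None})


def marketing_count(rows):
    """Marketing perspective: customers subscribed to email."""
    return sum(1 for r in rows if r["email_opt_in"])


# Every number is a view over the same records, not a separate copy of them.
counts = {
    "sales": sales_count(CUSTOMERS),
    "finance": finance_count(CUSTOMERS),
    "marketing": marketing_count(CUSTOMERS),
}
print(counts)
```

The three answers disagree, yet none of them is wrong, because each is explicitly a definition applied to the same underlying rows rather than a competing table.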

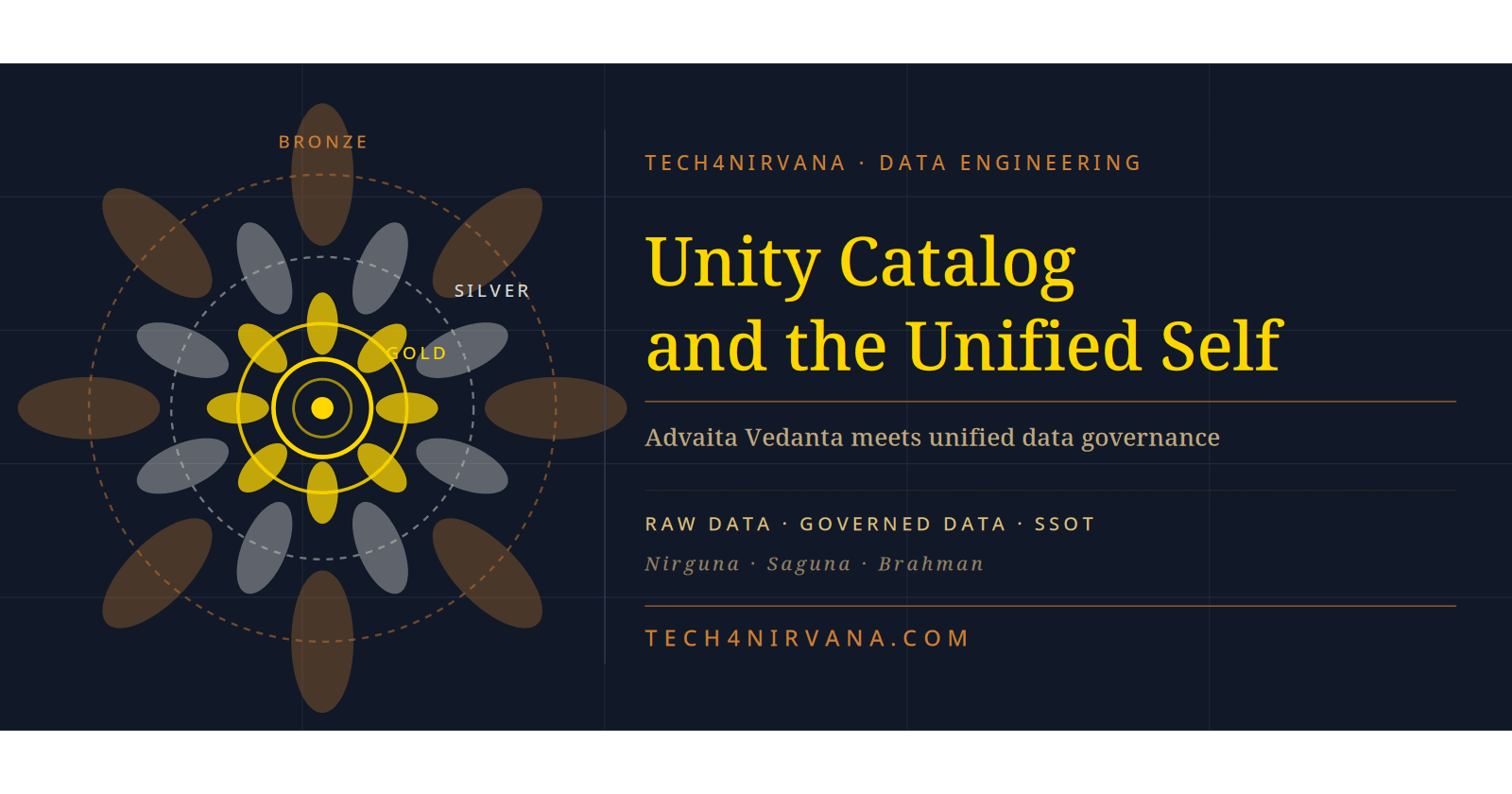

When I first encountered Unity Catalog, my Vedantic instinct recognised it immediately: this is the architecture of non-duality made operational. The Bronze layer is the unmanifest — raw, unprocessed, the Nirguna Brahman (Brahman without attributes). Silver is the first differentiation, cleansed and conformed. Gold is Saguna Brahman — Brahman with attributes, ready to be perceived and used by the world. The medallion architecture is not just a data pattern. It is a cosmology.

II. Maya and the Schema: Why Data Is Always an Approximation

One of Vedanta's subtler insights is that Maya is not illusion in the sense of falsehood. The world is not false. It is a real appearance — a functional reality that operates perfectly within its own domain, even if it is not the whole story. Your coffee cup is real for the purposes of drinking coffee. But at a deeper level, it is mostly empty space and probabilistic quantum fields.

Data engineers experience this tension every day, though few name it.

Every schema is Maya. Every data model is an approximation — a useful fiction that captures reality adequately while inevitably leaving out angles that will matter to someone, somewhere, at some future point.

A data model is not reality. It is a perspective on reality. Advaita calls this vivartavada — the appearance of transformation. The rope that appears as a snake. The schema that appears as a business.

Schema evolution is not a technical problem — it is an epistemological one. The schema must always change because our understanding of the business deepens. Fighting schema change is fighting the nature of knowledge itself.

Delta Lake's schema evolution features, the MERGE INTO pattern, the CLONE operations — these are not convenience features alone. They are a structural acknowledgment that every model is temporary. They are the data engineer's Neti Neti (नेति नेति) — not this, not this — iteratively approaching truth without ever claiming to have finally captured it.

    -- Schema evolution: acknowledging that the model is never final
    ALTER TABLE silver.customers ADD COLUMNS (
      preferred_channel STRING,
      lifetime_value_band STRING
    );

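    -- MERGE INTO: reconciling multiple partial truths into one governed table.
    -- Columns are named explicitly because the source carries only a subset of the
    -- target's columns; MERGE also expects at most one source row per customer_id.
    MERGE INTO silver.customers AS target
    USING (
      SELECT customer_id, email, preferred_channel
      FROM bronze.raw_crm_events
      WHERE event_date = current_date()
    ) AS source
    ON target.customer_id = source.customer_id
    WHEN MATCHED THEN UPDATE SET
      target.email = source.email,
      target.preferred_channel = source.preferred_channel
    WHEN NOT MATCHED THEN INSERT (customer_id, email, preferred_channel)
      VALUES (source.customer_id, source.email, source.preferred_channel);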

Between them, the Delta transaction log and Unity Catalog's audit and lineage records capture each of these changes, not as failures, but as the natural evolution of understanding. The lineage graph in Unity Catalog is, philosophically, a record of Viveka (discriminative wisdom) applied iteratively over time.
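For readers who want to see the semantics without a cluster, the following is a small sketch in plain Python of additive schema evolution followed by an upsert. It is a toy model built on dictionaries, not how Delta Lake is implemented; the function names `add_columns` and `merge_into` and the sample rows are assumptions made for illustration.

```python
def add_columns(table, columns, new_columns):
    """Additive schema evolution: existing rows get None for each new column."""
    for col in new_columns:
        if col not in columns:
            columns.append(col)
    for row in table.values():
        for col in new_columns:
            row.setdefault(col, None)


def merge_into(table, columns, source_rows, key="customer_id"):
    """Upsert: insert unseen keys, then overwrite only the columns the source supplies."""
    for src in source_rows:
        k = src[key]
        if k not in table:
            # NOT MATCHED: start from an empty row that follows the current schema.
            table[k] = {col: None for col in columns}
        # MATCHED (or just inserted): apply the source's partial truth.
        table[k].update(src)


columns = ["customer_id", "email"]
silver_customers = {
    1: {"customer_id": 1, "email": "a@example.com"},
    2: {"customer_id": 2, "email": "b@example.com"},
}

# The model changes: two new attributes appear, old rows are not rewritten by hand.
add_columns(silver_customers, columns, ["preferred_channel", "lifetime_value_band"])

# Today's events know about only some of the columns.
todays_events = [
    {"customer_id": 2, "email": "b@example.com", "preferred_channel": "sms"},
    {"customer_id": 3, "email": "c@example.com", "preferred_channel": "email"},
]
merge_into(silver_customers, columns, todays_events)
print(silver_customers)
```

Customer 1 keeps its old values with the new columns left empty, customer 2 is updated in place, and customer 3 is inserted. No row claims to know more than its source told it.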

III. Adhikara: Why Not Everyone Should See Everything

Advaita Vedanta has a concept that is often misunderstood by those unfamiliar with the tradition: Adhikara (अधिकार) — qualification or eligibility. The deepest teachings of non-duality are not presented indiscriminately to every seeker. There is a gradation — a recognition that different levels of inquiry require different levels of preparation, and that premature exposure to the highest teachings can confuse rather than illuminate.

This is not elitism. It is epistemological honesty.

The data governance challenge in regulated industries — Healthcare, Financial Services, Pharma — is precisely an Adhikara problem. Not because the data is being hidden maliciously, but because different roles have different legitimate needs and different levels of qualification to handle sensitive information responsibly.

The HIPAA-compliant healthcare data platform does not expose raw patient records to every analyst. The financial platform does not expose individual transaction details to every business user. Adhikara — qualification — determines access.

Unity Catalog operationalises Adhikara with surgical precision:

    -- Column masking: the data exists, but its form is appropriate to the viewer
    CREATE FUNCTION mask_ssn(ssn STRING)
      RETURNS STRING
      RETURN IF(IS_MEMBER('pii-approved-analysts'), ssn, 'XXX-XX-' || RIGHT(ssn, 4));

    ALTER TABLE silver.patients ALTER COLUMN ssn
      SET MASK mask_ssn;

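    -- Row-level security: each user sees only the universe they are qualified to see.
    -- Assumes one account group per region, named 'region-<analyst_region>' (a hypothetical convention).
    CREATE FUNCTION region_filter(analyst_region STRING)
      RETURN IS_ACCOUNT_GROUP_MEMBER(CONCAT('region-', analyst_region));

    ALTER TABLE gold.patient_outcomes
      SET ROW FILTER region_filter ON (analyst_region);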

    -- Fine-grained GRANT: Adhikara encoded as permissions
    GRANT SELECT ON TABLE gold.patient_outcomes
      TO `clinical-analytics-team`;

    REVOKE SELECT ON TABLE silver.raw_claims
      FROM `business-analysts`;

The beauty of Unity Catalog's approach is that the data is not duplicated for different access levels. The same underlying reality — Brahman, if you will — is presented in forms appropriate to the qualification of each observer. The senior data engineer sees the full raw record. The business analyst sees the governed, masked, aggregated view. The external partner sees only the Delta Shared subset. One reality, multiple valid perspectives, each appropriate to its Adhikara.
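The "one reality, several appropriate views" behaviour can be sketched in plain Python. This is a conceptual model only, not how Unity Catalog enforces policy. The `pii-approved-analysts` group comes from the masking example; the `region-...` group names, the sample patients, and the `read_patients` helper are assumptions made for illustration.

```python
# One stored copy of the data; nothing below duplicates or mutates it.
PATIENTS = [
    {"patient_id": "p1", "ssn": "123-45-6789", "region": "east"},
    {"patient_id": "p2", "ssn": "987-65-4321", "region": "west"},
]


def mask_ssn(ssn, viewer_groups):
    """Column mask: approved viewers see the value, everyone else the last four digits."""
    if "pii-approved-analysts" in viewer_groups:
        return ssn
    return "XXX-XX-" + ssn[-4:]


def read_patients(rows, viewer_groups):
    """Row filter plus column mask, evaluated per viewer at read time."""
    prefix = "region-"  # hypothetical naming convention for regional groups
    regions = {g[len(prefix):] for g in viewer_groups if g.startswith(prefix)}
    return [
        {**row, "ssn": mask_ssn(row["ssn"], viewer_groups)}
        for row in rows
        if row["region"] in regions
    ]


analyst_view = read_patients(PATIENTS, {"region-east"})
steward_view = read_patients(
    PATIENTS, {"region-east", "region-west", "pii-approved-analysts"}
)
print(analyst_view)
print(steward_view)
```

Both viewers read the same list. What differs is the projection each is entitled to, which is the property that removes the need for per-audience copies.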

IV. Delta Sharing as Vasudhaiva Kutumbakam

The ancient Sanskrit principle Vasudhaiva Kutumbakam (वसुधैव कुटुम्बकम्) — the world is one family — expresses the Advaitic insight that boundaries between self and other are ultimately illusory. At the deepest level, we are all one.

Unity Catalog's Delta Sharing protocol is Vasudhaiva Kutumbakam for the data ecosystem.

Delta Sharing allows you to share live, governed data across:

Organisational boundaries (share with partners, vendors, customers)

Cloud boundaries (share from Azure to AWS to GCP)

Platform boundaries (share with non-Databricks consumers)

No data copying. No replication. No loss of governance. The data remains in one place — governed by one Unity Catalog — but its benefits are shared across the whole family of consumers.

    # Delta Sharing: one governed source, shared with the whole family
    import delta_sharing

    # The recipient needs only a profile file, with no platform dependency
    client = delta_sharing.SharingClient("config.share")

    # Access the shared table from any platform, any cloud
    df = delta_sharing.load_as_pandas(
        "config.share#partner_share.gold.aggregated_outcomes"
    )

The philosophical alignment is precise: Delta Sharing does not dissolve the boundaries of governance (the organisations remain distinct, as Jivatmans remain apparently distinct). But it recognises the underlying unity — the shared data reality — and enables participation in that unity without demanding merger. This is exactly the Advaitic position: the apparent multiplicity is real at the vyavaharika (conventional) level, but the underlying unity is the paramarthika (ultimate) truth.
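The string passed to `load_as_pandas` above packs four pieces of information into one address: the profile file, then the share, schema, and table after the `#`. The sketch below unpacks that address in plain Python so the structure is visible. It is an illustrative helper, not the library's own parser, and the names `SharedTable` and `parse_table_url` are hypothetical.

```python
from typing import NamedTuple


class SharedTable(NamedTuple):
    profile_path: str  # where the recipient's credentials file lives
    share: str         # the provider's named share
    schema: str
    table: str


def parse_table_url(url: str) -> SharedTable:
    """Split '<profile>#<share>.<schema>.<table>' into its four parts."""
    # Split on the last '#', since the profile path itself may contain one.
    profile_path, sep, fragment = url.rpartition("#")
    if not sep or not profile_path:
        raise ValueError(f"expected '<profile>#<share>.<schema>.<table>', got {url!r}")
    parts = fragment.split(".")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected '<share>.<schema>.<table>' after '#', got {fragment!r}")
    return SharedTable(profile_path, *parts)


ref = parse_table_url("config.share#partner_share.gold.aggregated_outcomes")
print(ref)
```

Everything the recipient needs to locate the data is in that one string plus the profile file. The provider's catalog, storage account, and workspace never appear in it.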

V. The Pratibimba: Reflection Without Separation

One of the most beautiful concepts in Advaita Vedanta is Pratibimba (प्रतिबिम्ब) — the reflection. When Brahman appears as the individual soul, it is like the sun reflected in a pot of water. The reflection is real — it illuminates, it warms, it functions. But it is not separate from the original sun. When the pot is broken (when Avidya is dissolved), the reflection merges back into the original.

Unity Catalog's views and materialised views are Pratibimba — reflections of the underlying data reality.

A Gold table in the serving layer is a reflection of the Silver tables below it, which are reflections of the Bronze tables below them, which are reflections of the source systems at the root. Each layer is a real, functional, useful representation. But none of them is the ultimate truth — they are all expressions of the underlying data reality, governed and unified through the one Catalog.

    -- A materialised view is Pratibimba: real, functional, but not the source
    CREATE MATERIALIZED VIEW gold.customer_ltv_summary
    COMMENT 'Reflection of silver.transactions and silver.customers'
    AS
    SELECT
      c.customer_id,
      c.segment,
      SUM(t.transaction_value) AS lifetime_value,
      COUNT(t.transaction_id) AS total_transactions,
      MAX(t.transaction_date) AS last_activity_date
    FROM silver.customers c
    JOIN silver.transactions t ON c.customer_id = t.customer_id
    GROUP BY c.customer_id, c.segment;

Unity Catalog's data lineage automatically tracks these Pratibimba relationships — recording which views depend on which tables, which downstream models derive from which upstream sources. The lineage graph is the map of Pratibimba across the entire data estate.
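A lineage graph of this kind is, structurally, just a mapping from each object to the objects it is derived from. The following plain-Python sketch walks such a mapping to find everything a Gold object ultimately reflects. The edges are hand-written for illustration (in particular, `bronze.raw_transactions` is a hypothetical table), and this is not how Unity Catalog stores or exposes lineage.

```python
# Each key is a derived object; each value lists its direct upstream sources.
LINEAGE = {
    "gold.customer_ltv_summary": ["silver.customers", "silver.transactions"],
    "silver.customers": ["bronze.raw_crm_events"],
    "silver.transactions": ["bronze.raw_transactions"],  # hypothetical source
}


def upstream_sources(name, lineage):
    """Return every object that `name` depends on, directly or transitively."""
    seen = set()
    stack = list(lineage.get(name, []))
    while stack:
        parent = stack.pop()
        if parent not in seen:
            seen.add(parent)
            stack.extend(lineage.get(parent, []))
    return seen


def root_sources(name, lineage):
    """Return only the upstream objects that have no recorded parents of their own."""
    return {n for n in upstream_sources(name, lineage) if not lineage.get(n)}


print(sorted(upstream_sources("gold.customer_ltv_summary", LINEAGE)))
print(sorted(root_sources("gold.customer_ltv_summary", LINEAGE)))
```

The same traversal run in the opposite direction answers the impact question: if a Bronze table changes, which reflections downstream need to be re-examined.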

Implementing the Unified Self: A Practical Migration Path

Recognising the Advaitic truth of Unity Catalog is one thing. Migrating from the world of siloed Hive Metastores to unified governance is another.

Here is a migration framework I have applied in regulated environments:

Phase 1 — Inventory (Sravana: listening)

    # Enumerate the current fragmented reality
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.getOrCreate()

    # List all databases in Hive Metastore
    databases = spark.sql("SHOW DATABASES").collect()
    for db in databases:
        # The result column is named databaseName or namespace depending on the
        # runtime, so read it by position rather than by name.
        db_name = db[0]
        tables = spark.sql(f"SHOW TABLES IN {db_name}").collect()
        print(f"Database: {db_name} | Tables: {len(tables)}")

Phase 2 — Classify (Manana: reflection)

    # Classify tables by sensitivity before migrating
    # Not all data has the same Adhikara requirements
    sensitivity_map = {
        "bronze.raw_patient_events": "PII_HIGH",
        "silver.patient_demographics": "PII_MEDIUM",
        "gold.aggregated_outcomes": "PUBLIC_INTERNAL",
    }
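A classification is only useful if something is derived from it. As a minimal sketch, the map above can drive a grant plan that is reviewed before anything is executed. The policy table `READERS_BY_LEVEL`, the `data-platform-engineers` group, and the `plan_grants` helper are hypothetical; the `prod_healthcare` catalog name is borrowed from the Phase 3 example purely as a default.

```python
SENSITIVITY_MAP = {
    "bronze.raw_patient_events": "PII_HIGH",
    "silver.patient_demographics": "PII_MEDIUM",
    "gold.aggregated_outcomes": "PUBLIC_INTERNAL",
}

# Hypothetical policy: which groups may read each sensitivity level.
READERS_BY_LEVEL = {
    "PII_HIGH": ["data-platform-engineers"],
    "PII_MEDIUM": ["data-platform-engineers", "clinical-data-scientists"],
    "PUBLIC_INTERNAL": [
        "data-platform-engineers",
        "clinical-data-scientists",
        "business-analysts",
    ],
}


def plan_grants(sensitivity_map, readers_by_level, catalog="prod_healthcare"):
    """Turn a classification into GRANT statements as text, for review before execution."""
    statements = []
    for table, level in sorted(sensitivity_map.items()):
        if level not in readers_by_level:
            # An unclassified level is a governance gap, so fail loudly.
            raise KeyError(f"no reader policy defined for sensitivity level {level!r}")
        for group in readers_by_level[level]:
            statements.append(f"GRANT SELECT ON TABLE {catalog}.{table} TO `{group}`;")
    return statements


grants = plan_grants(SENSITIVITY_MAP, READERS_BY_LEVEL)
for statement in grants:
    print(statement)
```

Producing the statements as text rather than running them keeps the human sign-off step in the loop, which matters in regulated environments.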

Phase 3 — Migrate and Govern (Nididhyasana: realisation)

    -- Upgrade to Unity Catalog namespace
    CREATE CATALOG IF NOT EXISTS prod_healthcare;
    CREATE SCHEMA IF NOT EXISTS prod_healthcare.silver;

    -- Migrate with governance from day one
    CREATE TABLE prod_healthcare.silver.patients
      LOCATION 'abfss://silver@yourstorage.dfs.core.windows.net/patients'
      AS SELECT * FROM hive_metastore.legacy_db.patients;

    -- Apply Adhikara immediately
    GRANT SELECT ON TABLE prod_healthcare.silver.patients
      TO `clinical-data-scientists`;

The three phases map directly to the Vedantic path of Sravana (hearing/understanding), Manana (deep reflection), and Nididhyasana (direct realisation). You cannot skip phases. The organisation that tries to govern without first understanding what it has will fail, just as the seeker who claims realisation without genuine enquiry is merely performing wisdom.

A note on migration realism. The framework above is conceptually clean. Real migrations are not. In practice, expect friction at four points:

Workspace attachment sequencing — A Unity Catalog metastore is regional and account-scoped. Attaching multiple workspaces to the same metastore must be planned carefully; workspaces previously using different Hive Metastores will have namespace collisions that require manual resolution before migration proceeds.

External location conflicts — Tables created in Hive Metastore with LOCATION pointing to ADLS Gen2 paths need those paths registered as External Locations in Unity Catalog before they can be referenced. Unregistered paths will cause PERMISSION_DENIED errors that are not always immediately obvious in their root cause.

HMS sync and managed table ownership — Managed tables in the legacy Hive Metastore are owned by the workspace; after migration, Unity Catalog requires explicit ownership assignment at catalog, schema, and table levels. Missing this step leads to silent governance gaps where tables exist but have no effective steward.

Privilege inheritance gaps — Unity Catalog does not automatically inherit Hive Metastore ACLs. Every permission must be explicitly re-granted. In regulated environments, this is a compliance event, not just a technical step — it should be logged, reviewed, and signed off.

None of these friction points invalidate the framework. But acknowledging them is part of Manana — honest reflection on what the path actually involves, not just what it looks like on a whiteboard.
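The external location friction point in particular lends itself to a pre-flight check. The sketch below, in plain Python, compares legacy table paths against a list of registered external location prefixes and reports the tables that would fail. The input dictionaries are assumed to have been collected by hand or by an inventory script; `legacy_db.claims`, the `raw` container, and both helper names are hypothetical.

```python
def is_covered(table_location, external_locations):
    """True if the table path sits at or under one of the registered prefixes."""
    # Normalise trailing slashes so '/patients' does not match '/patients_archive'.
    path = table_location.rstrip("/") + "/"
    return any(path.startswith(loc.rstrip("/") + "/") for loc in external_locations)


def uncovered_tables(table_locations, external_locations):
    """Return the tables whose storage path has no registered external location."""
    return sorted(
        table
        for table, location in table_locations.items()
        if not is_covered(location, external_locations)
    )


REGISTERED = ["abfss://silver@yourstorage.dfs.core.windows.net/"]
TABLES = {
    "legacy_db.patients": "abfss://silver@yourstorage.dfs.core.windows.net/patients",
    "legacy_db.claims": "abfss://raw@yourstorage.dfs.core.windows.net/claims",
}

missing = uncovered_tables(TABLES, REGISTERED)
print(missing)
```

Running a check like this before Phase 3 turns a confusing runtime permission error into a short, reviewable list of paths that still need registering.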

The Unified Self in Production

When Unity Catalog is implemented with integrity — when the three-level namespace is consistently applied, when Adhikara is encoded at the column level, when Delta Sharing enables Vasudhaiva Kutumbakam with partners — something remarkable happens.

The data estate stops feeling like a collection of separate systems and begins to feel like a single, coherent intelligence. Analysts from different teams can trust each other's data because they share a common governance layer. Engineers spend less time negotiating access and more time building insight. The organisation stops managing multiplicity and starts experiencing unity.

This is not a metaphor. It is a measurable operational outcome.

But the Vedantic framing adds something that the purely technical framing misses: it reminds us why this matters. The fragmentation of data is not just a technical debt problem. It is a reflection of a fragmented organisational mind — a mind that has forgotten its own unity and is experiencing the suffering of Maya.

Unity Catalog does not just solve a technical problem. It is an invitation to a different way of thinking — one where the data estate is understood as a unified whole, where governance is understood as Dharma (right action in context), and where the role of the data engineer is not just to move bytes but to establish clarity where confusion reigns.

Tat tvam asi — Thou art That. The data and the business are not separate. The engineer and the organisation are not separate. The governance layer and the governed data are not separate. When this is truly understood — not as a slogan but as a lived architectural principle — the unified self emerges in production.

A Note on Tools vs. Principles

This article uses Databricks Unity Catalog as its primary example. That is deliberate: in my experience it is the most complete implementation of unified data governance available today on a cloud lakehouse platform. But the philosophical principles are not Databricks-specific.

The same Advaitic framework applies to any serious data governance implementation: Apache Atlas for metadata management, AWS Glue Data Catalog for AWS-native estates, Microsoft Purview for Azure-wide governance, or a Data Mesh architecture where federated computational governance replaces centralised control. The specific tool enforces the principle; it does not originate it.

What Unity Catalog offers is a particularly coherent operationalisation of the non-dual ideal: one catalog, one lineage, one governed ground. If your organisation uses a different stack, the question to ask is the same: does your governance layer establish a Single Source of Truth (SSOT), one ontological ground, or does it merely manage the proliferation of many? The answer determines whether your architecture reflects Brahman or perpetuates Maya, regardless of the vendor logo on the dashboard.

Conclusion

Unity Catalog is, technically, a centralised metadata and governance layer for Databricks workspaces. It solves real problems: cross-workspace data sharing, fine-grained access control, lineage tracking, and audit compliance.

But at a deeper level, it is an architectural expression of Advaitic wisdom: the recognition that what appears as many is, at its root, one — and that the role of good architecture, like the role of good philosophy, is to make that unity visible, governable, and available to all who are qualified to receive it.

Build with Unity Catalog. Build with unity.

Karthik Darbha is a Senior Data Engineering & AI Leader with 23 years of professional experience, including 20+ years building enterprise data platforms across Healthcare, Pharma, Retail, Insurance, and Financial Services.